AI in Sales and Marketing: Real Examples

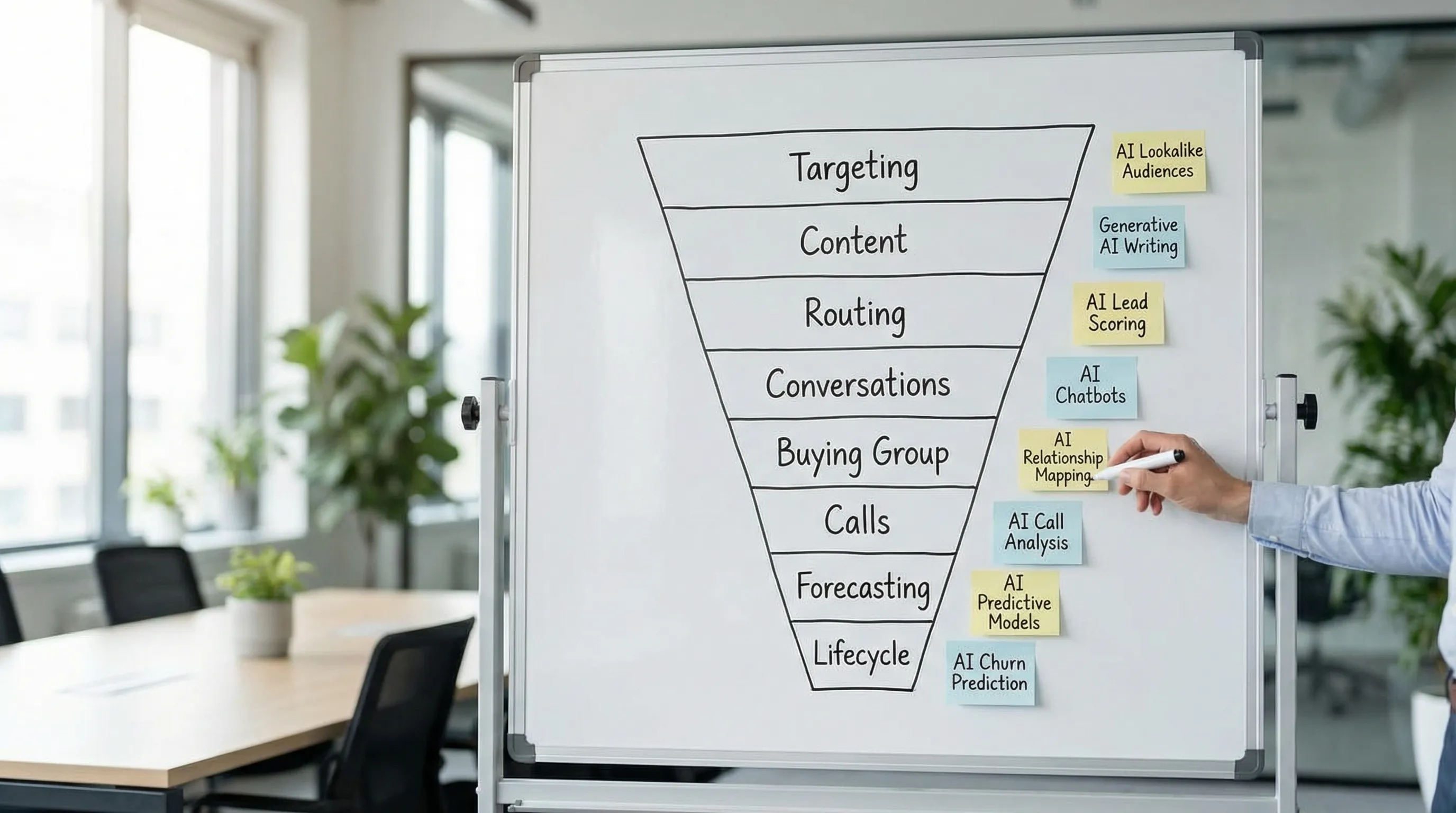

Practical, battle-tested examples of how teams apply AI across targeting, content, routing, conversations, forecasting, and lifecycle to drive measurable results.

AI in sales and marketing is no longer a “cool experiment” category. It is showing up as faster response times, cleaner qualification, more relevant messaging, and better forecasting. The teams getting value are not “doing AI” everywhere; they are applying it to specific jobs with clear inputs, guardrails, and success metrics.

Below are real, practical examples you can steal, whether you run demand gen, SDR, RevOps, or you own the full funnel.

Industry evidence: “Our research suggests that a fifth of current sales-team functions could be automated.” — McKinsey & Company.

Source: McKinsey

What “AI in sales and marketing” actually means (in practice)

Most revenue teams use a blend of three AI approaches:

- Generative AI: creates or transforms content (email drafts, ad copy variants, call summaries, objection responses).

- Predictive AI: estimates outcomes (likelihood to convert, likelihood to churn, forecast risk).

- Conversational AI and agents: carry out actions in a workflow (routing, follow-up, booking meetings, updating fields), often with human oversight.

A useful way to evaluate any example is to ask:

- What is the job to be done? (For example, “qualify replies consistently”)

- What are the inputs? (CRM fields, conversation text, firmographics, intent signals)

- What decision does it make, or action does it take?

- What do humans still own? (Strategy, exceptions, quality control, high-stakes conversations)

Real examples across the revenue funnel

The examples below are intentionally specific. They include where they fit (marketing or sales), what the AI does, and what you should measure.

| Example | Where it shows up | What the AI does | What to measure |
|---|---|---|---|
| 1) ICP matching and list building | Marketing + SDR | Finds accounts and contacts that match your ICP and filters out low-fit | ICP coverage, reply rate by segment, meetings held rate |
| 2) Personalized content variants | Marketing | Generates on-brand copy variants and landing page messaging by persona | CTR, CVR, CAC by segment, lift vs control |
| 3) Speed-to-lead routing | Marketing ops + SDR | Routes and prioritizes leads using fit, intent, and recency | Speed-to-first-meaningful-touch, MQL to SQL rate |
| 4) LinkedIn conversation qualification | SDR | Runs 1-to-1 conversations, asks qualifying questions, books meetings | Qualified conversation rate, meeting held rate, AE acceptance |
| 5) ABM buying group expansion | Marketing + SDR + AE | Suggests additional stakeholders, recommends who to contact next | Accounts with 2+ engaged personas, opp creation rate |
| 6) Call and meeting intelligence | Sales | Summarizes calls, tags themes, drafts follow-ups | Follow-up time, opportunity progression, win rate |
| 7) Forecast risk detection | RevOps + Sales | Flags deal risk using activity and conversation signals | Forecast accuracy, slip rate, stage conversion |
| 8) Lifecycle and expansion triggers | Marketing + CS + Sales | Predicts churn risk, suggests expansion timing and messaging | Retention, expansion pipeline, NRR |

1) ICP matching and list building that sales actually trusts

What it looks like: A marketer or SDR defines an ICP (industry, size, tech stack, triggers). AI helps build lists by pulling firmographic data, identifying likely buyer roles, and removing obvious non-fit accounts (or flagging them for a different motion).

Why it works: Manual list building breaks at scale, and “bigger lists” usually make quality worse. AI helps you keep the list tight while still moving fast.

How to measure it:

- Reply rate and positive reply rate by segment

- Qualified conversation rate (or SQL rate) per 100 accounts

- Meeting held rate (not just booked)

Common pitfall: Treating enrichment as truth. AI-assisted enrichment is probabilistic. Keep a “confidence” mindset and build a feedback loop: when sales rejects leads, capture why and use it to refine the ICP filters.
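
As a minimal sketch of that “confidence” mindset, the snippet below sends low-confidence enrichment to human review instead of trusting it, and tallies sales rejection reasons so the filters can be revisited. The field names, ICP definition, and thresholds are all hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical ICP definition and thresholds, for illustration only.
ICP_INDUSTRIES = {"software", "fintech"}
MIN_EMPLOYEES = 100
MIN_CONFIDENCE = 0.8  # below this, enrichment is checked by a human


def classify_account(account: dict) -> str:
    """Return 'target', 'review', or 'exclude' for one enriched account."""
    if account["confidence"] < MIN_CONFIDENCE:
        return "review"  # do not trust low-confidence enrichment either way
    fits = (
        account["industry"] in ICP_INDUSTRIES
        and account["employees"] >= MIN_EMPLOYEES
    )
    return "target" if fits else "exclude"


# Made-up accounts; "confidence" stands in for an enrichment confidence score.
accounts = [
    {"name": "A", "industry": "software", "employees": 250, "confidence": 0.9},
    {"name": "B", "industry": "retail", "employees": 900, "confidence": 0.95},
    {"name": "C", "industry": "fintech", "employees": 120, "confidence": 0.5},
]
labels = {a["name"]: classify_account(a) for a in accounts}

# Feedback loop: tally why sales rejected leads, then revisit the filters.
rejection_reasons = Counter(["too small", "wrong industry", "too small"])
top_reason = rejection_reasons.most_common(1)[0]
print(labels, top_reason)
```

If one rejection reason dominates, that is usually the filter to tighten first.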

2) Persona-specific content and creative variants without brand drift

What it looks like: Marketing uses AI to generate multiple ad or email variants tailored to different personas (CFO vs RevOps vs SDR manager). The key is that the AI is constrained by:

- A brand voice guide

- A list of allowed claims (no invented customer results)

- Approved proof points (case studies, benchmarks, screenshots)

Why it works: Relevance wins, but writing dozens of variants is expensive. Generative AI lowers the cost of iteration, so you can test more and learn faster.

How to measure it:

- Lift vs a control (your current best-performing version)

- Conversion rate on the next step (form submit, demo request, “reply with interest”), not only CTR

- Downstream quality (MQL to SQL rate, SQL to opportunity rate)

Common pitfall: Optimizing for engagement while degrading lead quality. If AI creates “too broad” messaging, you can get cheaper clicks and worse pipeline. Pair top-of-funnel metrics with quality gates.
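
For the “lift vs a control” metric listed above, one common definition is the relative change in conversion rate. The sketch below assumes that definition; the function names and counts are made up for illustration.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who completed the next step (e.g. a demo request)."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative lift of the variant over the control, as a fraction."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts, for illustration only.
control = conversion_rate(conversions=40, visitors=2000)
variant = conversion_rate(conversions=50, visitors=2000)
lift = relative_lift(control, variant)
print(f"Relative lift: {lift:.1%}")
```

With small samples a lift like this can be noise, so it is worth pairing with a significance check and the downstream quality metrics before declaring a winner.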

3) Speed-to-lead routing powered by fit, intent, and recency

What it looks like: When a lead hits your CRM (from a form, event scan, inbound LinkedIn, webinar), AI helps:

- Classify the lead’s likely intent level

- Route it to the right queue (hot lane vs nurture lane)

- Recommend the first action (call now, personalized email, LinkedIn follow-up)

This is where AI supports marketing ops and SDR leadership: the system enforces consistency when humans are busy.

Why it works: Speed-to-lead is one of the most consistently cited drivers of conversion for inbound motions. Even without perfect scoring, getting to “first meaningful touch” faster is a reliable lever.

For background on why response speed matters, see Harvard Business Review’s research on lead response time.

How to measure it:

- Speed-to-first-meaningful-touch (not just “time to first activity”)

- Lead to MQL conversion rate and MQL to SQL conversion rate

- SLA adherence by segment

Common pitfall: Routing based on a single score with no explanation. Sales adoption increases when you can show “why” a lead was prioritized (recent activity, strong fit, explicit intent).
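
One way to make the “why” visible is to return the reasons alongside the routing decision. This is a rule-based sketch, not a scoring model; the `Lead` fields, thresholds, and lane names are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Lead:
    fit_score: float             # 0..1 match against the ICP (assumed scale)
    requested_demo: bool         # explicit intent signal
    hours_since_activity: float  # recency of the last tracked activity


def route_lead(lead: Lead) -> Tuple[str, List[str]]:
    """Return (lane, reasons). Thresholds are illustrative, not recommendations."""
    reasons = []
    if lead.fit_score >= 0.7:
        reasons.append("strong fit")
    if lead.requested_demo:
        reasons.append("explicit intent")
    if lead.hours_since_activity <= 24:
        reasons.append("recent activity")
    # Two or more supporting signals send the lead to the hot lane.
    lane = "hot" if len(reasons) >= 2 else "nurture"
    return lane, reasons


lane, reasons = route_lead(
    Lead(fit_score=0.8, requested_demo=True, hours_since_activity=30)
)
print(lane, reasons)
```

Storing the reasons next to the lane in the CRM gives reps something to check a prioritization against.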

4) LinkedIn conversation qualification that feels human (and stays controlled)

What it looks like: Instead of blasting sequences, AI manages 1-to-1 LinkedIn conversations at scale:

- Starts with a personalized opener based on profile and context

- Responds quickly when prospects reply

- Asks lightweight qualifying questions

- Books a meeting when the prospect is ready

- Escalates to a human when stakes are high or the thread is sensitive

This is where conversational AI shines because LinkedIn is a dialogue channel, not a “touchpoint” channel.

Why it works: The biggest bottlenecks in outbound are often (1) slow responses to replies, (2) inconsistent qualification, and (3) too much SDR time spent on low-fit threads. AI can handle the repetitive back-and-forth while humans focus on high-value moments.

How to measure it:

- Qualified conversation rate (replies that meet your criteria)

- Meeting booked rate and meeting held rate

- Human override rate (how often reps step in), paired with quality outcomes

Common pitfall: Letting AI run without clear escalation rules. If you deploy conversation automation, make sure you have:

- Defined qualification criteria

- Tone and safety policies

- Human override controls

Kakiyo is purpose-built for this specific job: managing autonomous LinkedIn conversations from first touch through qualification and meeting booking, with controls like A/B prompt testing, scoring, analytics, and conversation override. If your team is exploring this motion, start at the product overview on kakiyo.com.

5) ABM buying group expansion (multi-threading without chaos)

What it looks like: AI reviews an account’s CRM notes, engaged contacts, and conversation themes, then suggests:

- Missing roles in the buying group (security, finance, ops)

- The next best persona to approach

- A tailored message angle for that persona

Why it works: Most B2B deals are group decisions. Marketing often engages one champion, sales often talks to one evaluator, and deals stall because no one systematically expands coverage.

For context on buying groups and complex B2B journeys, see Gartner’s work on B2B buying behavior.

How to measure it:

- Accounts with 2+ engaged stakeholders

- Time from first engaged contact to opportunity creation

- Opportunity progression (stage conversion) and stall rate

Common pitfall: Confusing “more contacts” with “more progress.” Track whether multi-threading correlates with stage movement and meeting quality.

6) Call and meeting intelligence that improves execution (not just notes)

What it looks like: AI summarizes calls, extracts next steps, tags objections, and drafts follow-up emails. Some teams also use AI to identify patterns across calls (competitor mentions, pricing objections, missing personas).

Why it works: Reps forget details, follow-ups are delayed, and insights rarely make it back to marketing. Summaries and tags reduce admin work and make insights searchable.

How to measure it:

- Follow-up sent time (median time post-call)

- Opportunity stage progression rate within 7 to 14 days

- Common objections by segment (as an enablement input)

Common pitfall: Treating summaries as ground truth. Use AI outputs as drafts and signals, not as a legal record. In regulated industries, define what can be stored and for how long.

7) Forecast risk detection using leading indicators (not gut feel)

What it looks like: AI flags deals that are likely to slip based on patterns such as:

- Long gaps since last meaningful customer interaction

- No engagement from key roles

- Repeated rescheduling

- Weak mutual plan signals in notes

This is often more valuable than a single “close probability” field because it drives action (who to contact, what to fix).
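
The patterns above can be sketched as simple, explainable rules before any model is involved. The `Deal` fields and thresholds below are hypothetical; a real system would derive them from CRM activity and conversation data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Deal:
    days_since_last_interaction: int
    key_roles_engaged: int
    reschedule_count: int
    has_mutual_plan: bool


def risk_flags(deal: Deal) -> List[str]:
    """Return human-readable risk flags. Thresholds are illustrative only."""
    flags = []
    if deal.days_since_last_interaction > 14:
        flags.append("long gap since last meaningful interaction")
    if deal.key_roles_engaged == 0:
        flags.append("no engagement from key roles")
    if deal.reschedule_count >= 2:
        flags.append("repeated rescheduling")
    if not deal.has_mutual_plan:
        flags.append("weak mutual plan signals")
    return flags


flags = risk_flags(
    Deal(days_since_last_interaction=21, key_roles_engaged=0,
         reschedule_count=1, has_mutual_plan=True)
)
print(flags)
```

Each flag names something a rep can act on, which is the advantage over a single probability field.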

Why it works: Forecasts fail when they rely on subjective stage definitions and inconsistent rep updates. Risk detection tied to observable behavior is easier to operationalize.

How to measure it:

- Forecast accuracy over time (use a consistent method like rolling backtests)

- Slip rate by stage

- Save rate after risk interventions (when a flagged deal is successfully recovered)

Common pitfall: Label leakage. If your model uses fields that are updated after the fact (or contain outcome hints), it will look great in testing and fail in production. RevOps should pressure-test inputs.
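
One way to pressure-test inputs is to build training features only from field values that existed at the moment the prediction would have been made. The sketch below assumes a hypothetical field-history log of `(field, updated_on, value)` records; it is not tied to any particular CRM.

```python
from datetime import date


def snapshot_as_of(field_history, cutoff):
    """Keep, per field, the latest value recorded on or before the cutoff date."""
    snapshot = {}
    # Process records oldest first so later values overwrite earlier ones.
    for field, updated_on, value in sorted(field_history, key=lambda r: r[1]):
        if updated_on <= cutoff:
            snapshot[field] = value
    return snapshot


# Made-up history for one deal.
history = [
    ("stage", date(2024, 1, 10), "discovery"),
    ("stage", date(2024, 3, 5), "closed_lost"),  # written after the outcome was known
    ("reschedule_count", date(2024, 1, 20), 2),
]
features = snapshot_as_of(history, cutoff=date(2024, 2, 1))
print(features)
```

Here the later `closed_lost` value never reaches the features, so the outcome cannot leak into training.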

8) Lifecycle and expansion triggers that align marketing, sales, and CS

What it looks like: AI monitors product usage signals, support tickets, renewal dates, and engagement to:

- Predict churn risk

- Trigger a save motion

- Identify upsell timing (new team adoption, usage ceilings)

- Recommend messaging for the next touch

Why it works: Lifecycle marketing often runs on fixed cadences (“send the renewal email 90 days out”), but customers do not behave on fixed cadences. Triggers tied to real usage and intent tend to perform better.

How to measure it:

- Retention and renewal rate

- Expansion pipeline created from triggers

- Net revenue retention (NRR)

Common pitfall: Over-automating sensitive customer situations. If a customer is escalating a support issue, the “upsell suggestion” should be suppressed. Build suppression rules and human review for high-risk accounts.
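
A suppression rule can be as simple as checking for open escalations before any other trigger fires. The `Account` fields, the 0.6 churn threshold, and the motion names below are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Account:
    open_escalations: int
    churn_risk: float         # 0..1, from a hypothetical churn model
    near_usage_ceiling: bool


def next_motion(account: Account) -> str:
    """Pick the next lifecycle motion, with suppression checked first."""
    # Suppression comes first: never pitch an upsell into an open escalation.
    if account.open_escalations > 0:
        return "suppress_upsell_human_review"
    if account.churn_risk >= 0.6:
        return "save_motion"
    if account.near_usage_ceiling:
        return "expansion_outreach"
    return "no_action"


print(next_motion(Account(open_escalations=1, churn_risk=0.1, near_usage_ceiling=True)))
```

The order of the checks is the design choice that matters: the escalation rule wins even when the account otherwise looks ready for expansion.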

A simple scorecard for proving AI value (without vanity metrics)

If you want AI examples to survive budget scrutiny, attach them to funnel outcomes and leading indicators.

| Funnel area | Leading indicators to track weekly | Outcome metrics |
|---|---|---|
| Targeting and outbound | ICP coverage, connection acceptance, reply rate | Qualified conversation rate, meetings held |
| Inbound and routing | Speed-to-first-meaningful-touch, SLA adherence | Lead to MQL, MQL to SQL |
| Qualification | Qualification rate per reply, override rate | AE acceptance, meeting to opportunity |
| Pipeline | Stakeholder coverage, next-step compliance | Stage conversion, slip rate |
| Lifecycle | Activation milestones, usage health | Retention, expansion, NRR |

How to start (a safe, high-learning pilot in 30 days)

The fastest path to real results is to pick one workflow where speed and consistency matter, then run a measured pilot.

- Pick one narrow use case (for example, LinkedIn reply handling and qualification, or inbound routing).

- Define “qualified” in one paragraph so the AI and humans optimize for the same thing.

- Instrument a baseline for two weeks (control group). You need “before” data.

- Add guardrails: escalation triggers, disallowed claims, compliant data handling.

- Run A/B tests with one variable at a time (message angle, CTA, qualification question order).

- Review weekly with sales, marketing, and RevOps together, then change one thing.

If your current bottleneck is LinkedIn conversations that do not get handled quickly or consistently, a platform designed for autonomous LinkedIn qualification can be the simplest pilot, because it is naturally measurable (reply rate, qualified conversations, meetings booked and held). Kakiyo’s focus is exactly that: AI-managed LinkedIn conversations from first touch through qualification and meeting booking, with supervision and analytics built in.

FAQ

Where does AI actually help in sales?

AI helps most when it reduces repetitive work, surfaces better signals, and improves execution speed. It is strongest in research, qualification support, follow-up, and workflow automation.

What are the limits of AI in sales?

AI still depends on clean inputs, good process design, and human oversight. It can accelerate bad workflows just as easily as good ones.

How should teams evaluate AI sales tools?

Evaluate tools by workflow fit, data quality, implementation friction, and measurable business outcomes. A feature-rich tool is not automatically the right tool for your motion.

Does AI replace SDRs or sales reps?

Usually no. In most teams, AI reshapes the role by taking over repetitive execution while humans stay responsible for judgment, positioning, and relationship depth.