Business Development Rep Google Cloud: Scorecard

Practical, interview-ready hiring scorecard for Business Development Representatives selling to Google Cloud buyers, including competencies and a scoring rubric.

Hiring a Business Development Representative (BDR) for Google Cloud is not the same as hiring a generalist outbound rep.

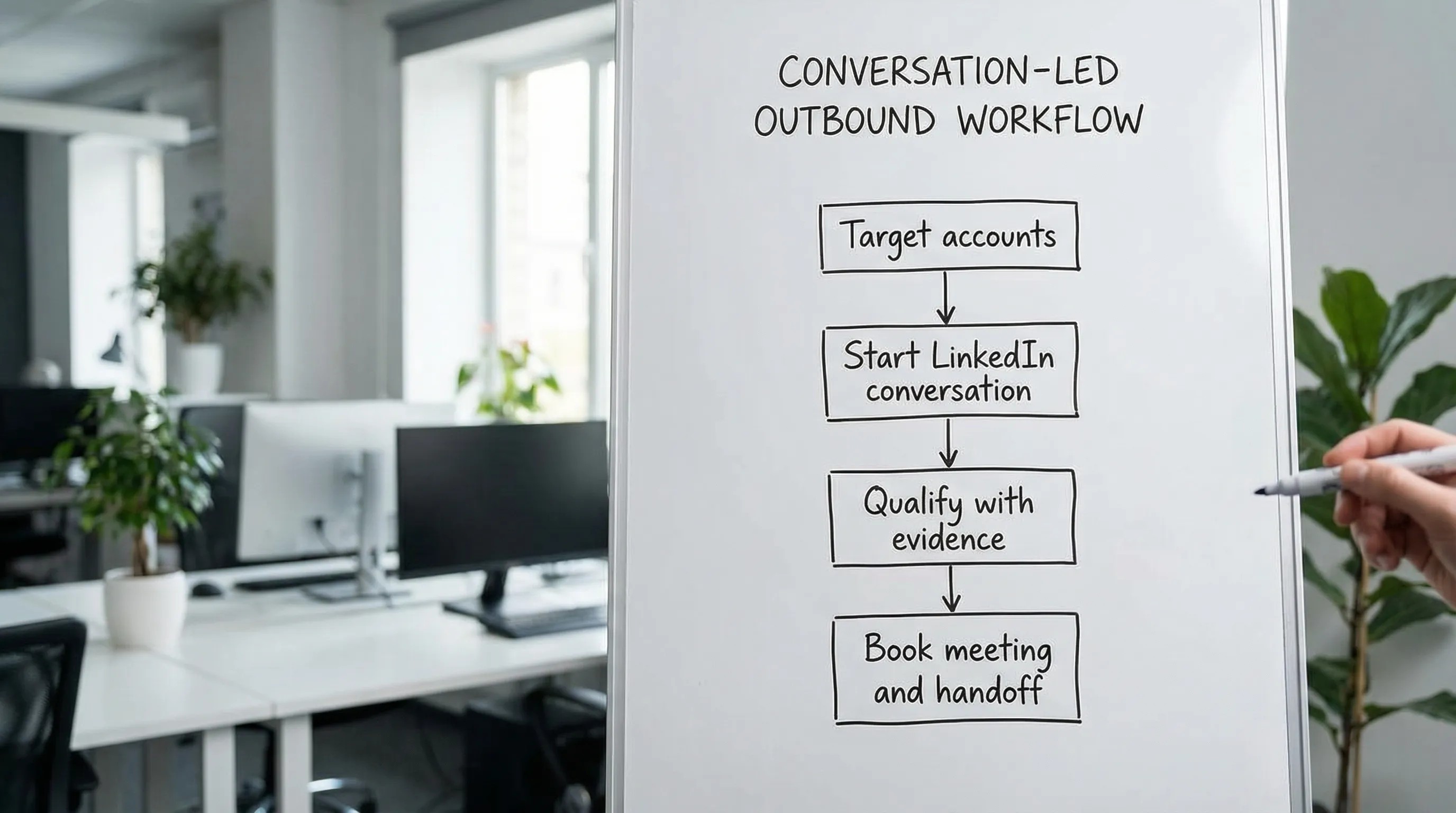

In most cloud motions, the buyer is evaluating risk (security, reliability, compliance), technical fit (workloads, architecture), and internal alignment (finance, IT, data teams) long before they agree to a meeting. That means the best BDRs are not “pitchy.” They are precise: they pick the right accounts, open conversations with credible context, qualify in-thread without interrogating, and hand off meetings that AEs actually accept.

A hiring scorecard is how you make that repeatable.

This guide gives you a practical, interview-ready hiring scorecard specifically for Google Cloud-aligned roles (Google Cloud direct, partners, and vendors selling to Google Cloud buyers). You can copy it into your ATS, use it to calibrate interviewers, and use it to protect quality when you scale.

Industry evidence: Salesforce Research reports that sales reps spend only 28% of their week actually selling, with the rest consumed by deal management, data entry, and other non-selling work.

Source: Salesforce State of Sales

What you are really hiring for (the outcome, not the activity)

Before competencies, lock the outcome. Otherwise every interviewer “votes” based on vibes.

For a Google Cloud BDR, the clean outcome is:

- AE-accepted meetings with the right buyer group

- That occur within a defined ICP slice (industry, company size, workloads)

- With a clear next step and evidence captured (why now, why us, what problem)

If your scorecard doesn’t explicitly predict AE acceptance, it will drift toward vanity metrics (connection accepts, reply counts, meetings set regardless of quality).

A helpful internal rule: if a meeting gets rejected by the AE, the BDR should be able to point to the evidence they captured and explain why it looked meeting-worthy at the time.

The Google Cloud context that changes what “good” looks like

Google Cloud deals often involve:

- Technical stakeholders (platform engineering, security, data, architects)

- Cloud migration or modernization programs (multi-quarter, multi-team)

- Tool sprawl and vendor overlap (native GCP services vs ISVs vs SI partners)

- Procurement constraints (commitments, partner funding, existing agreements)

So a strong Google Cloud BDR needs enough cloud fluency to be credible, without pretending to be a solutions architect.

A good working definition is:

- They can speak clearly about categories like infrastructure, data platforms, security, AI/ML, and app modernization.

- They can ask risk-reducing questions (current stack, constraints, timelines, blockers).

- They avoid deep technical debates and instead earn the right to bring an AE or specialist in.

If you want candidates to ramp faster, point them at role-relevant learning paths like Google Cloud Skills Boost and, in the interview, ask what they learned from them.

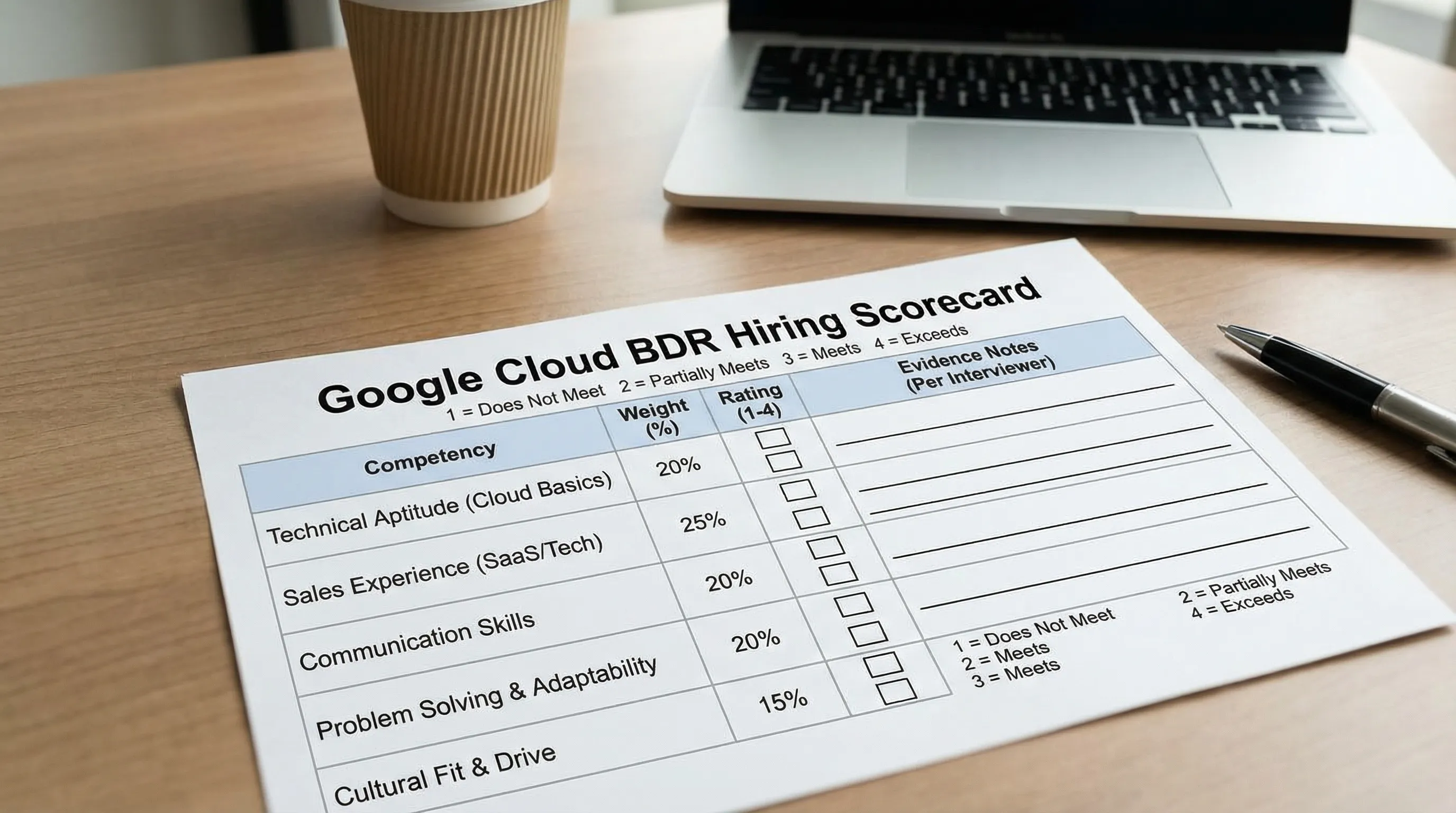

Business Development Representative Google Cloud: hiring scorecard (copy-ready)

Use the scorecard below as your single source of truth. Keep it behavior-based and observable.

Scoring scale

Use a simple 1 to 4 scale (avoid 1 to 10; it creates fake precision):

- 1 (No evidence): could not demonstrate the competency

- 2 (Developing): understands conceptually, weak execution or shallow examples

- 3 (Strong): repeated proof, solid judgment, clear examples

- 4 (Exceptional): consistently high bar, teaches others, shows strong pattern recognition

Core competencies and weights

| Competency (Google Cloud BDR) | Weight | What “strong” looks like (observable) | What to listen for (evidence) |
|---|---|---|---|
| ICP thinking and account selection | 15% | Can narrow ICP slices and explain tradeoffs | Chooses specific workloads, segments, triggers, disqualifiers |
| Cloud fluency (buyer-relevant, not overly technical) | 10% | Speaks credibly about GCP-related initiatives | Can explain what changed, why it matters, who cares |
| Conversation-first outreach (LinkedIn and email) | 15% | Writes short, specific messages that earn replies | Permission-based openers, relevant proof, clear micro-CTA |
| In-thread qualification and next-step control | 15% | Qualifies without interrogation, captures evidence | Asks 1 good question, summarizes, proposes a crisp next step |
| Multi-threading and buying group mapping | 10% | Expands within accounts without spamming | Names roles, sequencing logic, internal referral asks |
| Experimentation and measurement | 10% | Runs small tests, learns fast, avoids random activity | Defines hypothesis, measures micro-conversions, iterates |
| Process discipline and CRM hygiene | 10% | Leaves an audit trail that helps the AE | Notes with fit/intent/next step, clean stage definitions |
| Coachability and collaboration | 10% | Accepts feedback, improves quickly, partners well | Specific examples of change after coaching |
| Integrity, compliance, and brand safety | 5% | Respects buyer experience and platform rules | Stop rules, opt-out respect, avoids automation abuse |

Total: 100%
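To make the weighting concrete, here is a minimal sketch of how the per-competency ratings could roll up into one number. The weights come from the table above; the dictionary keys, the function name, and the example candidate are illustrative assumptions, not part of any ATS or tool. The result stays on the same 1 to 4 scale, so a weighted 3.0 still reads as "Strong."

```python
# Weights copied from the competency table; the short keys are illustrative names.
WEIGHTS = {
    "icp_and_account_selection": 0.15,
    "cloud_fluency": 0.10,
    "conversation_first_outreach": 0.15,
    "in_thread_qualification": 0.15,
    "multi_threading": 0.10,
    "experimentation": 0.10,
    "process_discipline": 0.10,
    "coachability": 0.10,
    "integrity_and_compliance": 0.05,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Return the weighted average of 1-4 ratings, itself on the 1-4 scale."""
    if set(ratings) != set(WEIGHTS):
        raise ValueError("ratings must cover exactly the scorecard competencies")
    if any(r not in (1, 2, 3, 4) for r in ratings.values()):
        raise ValueError("each rating must be 1, 2, 3, or 4")
    return round(sum(WEIGHTS[name] * r for name, r in ratings.items()), 2)


# Hypothetical candidate: a 3 everywhere, except a 4 on outreach and a 2 on cloud fluency.
example = {name: 3 for name in WEIGHTS}
example["conversation_first_outreach"] = 4
example["cloud_fluency"] = 2
print(weighted_score(example))  # 3.05
```

A single number is a summary, not a decision. Whether a low rating on one competency should block a hire regardless of the total is a judgment call for your team; this sketch does not encode one.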

Interview loop design (fast, fair, and predictive)

A scorecard works best when your loop is structured to collect the evidence the scorecard requires.

Here is a practical loop that fits most teams, including partners and high-growth ISVs.

| Stage | Duration | Interviewer | What you are testing | Artifact to capture |
|---|---|---|---|---|
| Recruiter screen | 20 to 30 min | Recruiting | Motivation, communication, baseline fit | Notes on segment experience and career intent |
| Hiring manager deep dive | 45 min | SDR/BDR manager | ICP thinking, coachability, process discipline | 2 past examples: best meeting, worst meeting (why) |
| Writing test (async) | 20 to 30 min | Manager or enablement | Message quality, relevance, clarity | 2 LinkedIn DMs + 1 follow-up + 1 disqualifier note |
| Live role-play | 30 min | Future peer or manager | Qualification, objection handling, next step control | Scored transcript + “next step” summary |
| Cross-functional (AE or SE light) | 30 min | AE (and optionally SE) | Handoff quality, collaboration, credibility | What an AE would need to accept the meeting |

Keep the loop consistent. If you improvise questions per candidate, you will over-index on charisma and under-index on repeatable skill.

Work sample exercises that actually predict performance

A Google Cloud BDR role rewards two abilities: selecting the right context and running a clean conversation.

Below are three work samples that test those abilities without requiring the candidate to “know your product.”

Work sample 1: account and trigger brief (tests ICP judgment)

Prompt: “Pick one target account from this list. Write a 6-sentence brief: why this account, why now, who to contact first, and one disqualifier.”

What you are looking for:

- They choose a realistic entry point (role and team)

- They use a plausible trigger (hiring, migration, compliance event, initiative)

- They include a disqualifier (so they do not qualify everything)

Scoring rubric

| Dimension | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| Specificity | Generic cloud buzzwords | Some detail, still broad | Clear workload, clear team | Sharp wedge, crisp “why now” |
| Buyer mapping | “CTO” only | 1 role plus vague others | 2 to 3 roles and sequence | Buying group logic, internal champion strategy |
| Disqualification | None | Weak or irrelevant | Clear and realistic | Clear plus how they would confirm it |

Work sample 2: LinkedIn thread simulation (tests conversation control)

Prompt: “Here is a prospect reply: ‘We are already on AWS, not looking.’ Continue the LinkedIn conversation for two turns to clarify and earn a next step.”

Strong candidates:

- Do not argue

- Ask one clarifying question that creates a fork (exit or advance)

- Offer a low-friction next step (short call, quick comparison, or resource)

You can compare this to your team’s standards for safe LinkedIn outreach. If you need a reference cadence to align interviewers, see Kakiyo’s guide on cold prospecting on LinkedIn.

Work sample 3: handoff packet (tests meeting quality)

Prompt: “Assume the prospect agreed to meet. Write the internal handoff note you would send the AE.”

Require these fields:

- Who: title, team, influence

- Why now: trigger, initiative, urgency

- Problem statement: in buyer’s words

- Current state: what they use today

- Next step: meeting goal, attendees, agenda

This aligns with evidence-based qualification and avoids “thin” meetings that get rejected. If your org already uses an evidence standard (fit, intent, next step, recency), keep the same language in the scorecard so hiring reinforces your operating model.
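If you want the writing test or your CRM to enforce those required fields, one option is to treat the handoff note as a small structured record and flag anything left blank. This is a sketch only: the class name, field names, and sample values are hypothetical and simply mirror the field list above.

```python
from dataclasses import dataclass, fields


@dataclass
class HandoffNote:
    """Internal handoff note; field names mirror the required fields above."""
    who: str                # title, team, influence
    why_now: str            # trigger, initiative, urgency
    problem_statement: str  # in the buyer's words
    current_state: str      # what they use today
    next_step: str          # meeting goal, attendees, agenda

    def missing_fields(self) -> list[str]:
        """Names of fields left empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Made-up example note with the problem statement left blank.
note = HandoffNote(
    who="Director of Platform Engineering, owns the migration budget",
    why_now="Data warehouse contract renews next quarter",
    problem_statement="",
    current_state="Self-managed Hadoop on-prem",
    next_step="30-minute intro with AE; agenda: migration scope",
)
print(note.missing_fields())  # ['problem_statement']
```

A completeness check like this only catches empty fields. Whether the content is strong enough for an AE to accept the meeting still has to be scored by a person against the rubric.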

The “Google Cloud BDR” competency interview questions (with what good answers contain)

Use fewer questions, go deeper. You are trying to extract patterns, not trivia.

ICP and targeting

Ask: “Walk me through the last segment you owned. How did you decide which accounts were worth your time?”

Good answers include:

- A narrow ICP slice (not “mid-market tech”)

- Clear disqualifiers

- A prioritization method (signals, whitespace, initiatives)

Cloud fluency

Ask: “Explain a cloud initiative you prospected into. What was the business reason, and who cared most?”

Good answers include:

- A business driver (cost, speed, reliability, compliance)

- A buyer group (engineering + security + finance)

- Humility about technical depth (“I bring in an AE/SE when…”)

Qualification

Ask: “Tell me about a meeting you disqualified. What did you learn and how did you handle the thread?”

Good answers include:

- They can say “no” quickly

- They protect the buyer experience

- They capture the reason (so targeting improves)

If you want a shared internal definition for what counts as qualified, standardize it. Kakiyo’s post on Sales SQL definition and criteria is a useful model for turning “qualified” into enforceable exit criteria.

Experimentation

Ask: “What is one outreach test you ran that changed your approach?”

Good answers include:

- Hypothesis, variable, measurement

- What they stopped doing

- How they avoided scaling the wrong thing

Red flags that matter in cloud outbound

You can prevent most bad hires by naming the red flags explicitly.

| Red flag | Why it fails in Google Cloud motions | What to probe |
|---|---|---|
| Over-indexing on volume | Cloud buyers punish generic outreach | Ask for their stop rules and how they protect deliverability and brand |
| “I book meetings, AEs qualify” | Creates AE rejection and pipeline mistrust | Ask how they define a qualified meeting and what evidence they capture |
| Fake technical confidence | Erodes trust quickly with technical buyers | Ask them to explain something simply, then ask where they would pull in an SE |
| No disqualifiers | Everything looks like a lead, nothing converts | Ask for 3 reasons they would exit an account early |
| No experimentation cadence | Stagnant performance, random activity | Ask what they A/B test and what metric decides a winner |

How to evaluate AI usage (without rewarding spam)

In 2026, many candidates will say they “use AI” for outreach. That is not a differentiator. How they use it is.

What you want:

- AI to reduce busywork (drafting, summarizing threads, suggesting variants)

- Human judgment to control targeting, qualification, and stop rules

- Evidence capture so handoffs remain auditable

What you do not want:

- Unsupervised automation that sends generic messages at scale

- No clear ownership of mistakes

Ask directly: “What tasks do you automate, what do you never automate, and what guardrails do you use?”

If your team uses AI to run or assist LinkedIn conversations, name your expectations. Tools like Kakiyo are designed to manage personalized LinkedIn conversations at scale with qualification and meeting booking, but your BDR still needs to set the inputs, review outcomes, and intervene when a thread gets sensitive.

Calibration: how to make the scorecard usable across interviewers

A scorecard fails when:

- Interviewers do not share definitions

- People score on “likability”

- Feedback is not tied to evidence

Run a 15-minute calibration before you start interviewing.

Align on:

- What counts as a 3 for qualification

- What counts as a 3 for message quality

- Your minimum bar for “cloud fluency” (buyer-relevant language, not certifications)

Then enforce one rule: no score without a quote or artifact.

Examples:

- “Gave a clear disqualifier: ‘If you are locked into a 3-year commitment and cannot evaluate alternatives this year, I would pause.’”

- “Converted objection by asking one clarifying question and offering a low-friction next step.”

What “good” looks like in the first 30 days (so you hire for it)

You are not hiring for immediate pipeline. You are hiring for a predictable ramp.

In a Google Cloud BDR role, a strong first 30 days looks like:

- A documented ICP slice with disqualifiers

- A repeatable LinkedIn conversation starter and 2 follow-ups

- A clear definition of a qualified meeting and a handoff template

- A weekly experiment cadence (one variable, one learning)

If you already run a conversation-led qualification system, align this to your existing operating rhythm so the new hire does not learn one thing in interviews and another on the floor.

Where Kakiyo fits (if LinkedIn is a core channel)

If your BDR team is LinkedIn-first and you want to scale without sacrificing quality, the constraint is usually not effort; it is conversation throughput with governance.

Kakiyo is built to autonomously manage personalized LinkedIn conversations from first touch through qualification to meeting booking, with controls like prompt creation, A/B testing, scoring, override control, and analytics. In hiring, that changes what you should emphasize:

- Candidates who can design good prompts and qualify in-thread

- Candidates who can interpret performance data and iterate

- Candidates who protect buyer experience with stop rules and clear escalation

If you want your hiring process to match your outbound reality, this scorecard helps you select BDRs who can run high-quality LinkedIn conversations, with or without AI assistance.

For related operating guidance, you may also want to align with your internal definitions for qualification and acceptance. Kakiyo’s resources on lead qualification processes and proof-based qualification are useful references for making “qualified” auditable.

FAQ

What does a strong SDR or BDR actually own?

A strong SDR or BDR owns early-stage pipeline creation: targeting, first conversations, qualification, and clear handoff. The role is not just about booking meetings but about booking the right meetings.

Which skills matter most in modern sales development?

Prospecting judgment, messaging clarity, qualification discipline, and CRM hygiene matter more than raw call volume. In modern teams, AI literacy and channel fluency also matter.

How should teams evaluate SDR performance?

Use a mix of activity, conversion, and pipeline quality metrics. Strong teams look beyond booked meetings to show rates, qualification accuracy, and accepted pipeline.

How long does it take for a new rep to become productive?

Most reps need a ramp period before they consistently generate qualified pipeline. The fastest ramp comes from clear playbooks, tight feedback loops, and strong qualification criteria.