AI Sales Enablement: A 30-Day Rollout

A practical 30-day rollout to treat AI as a revenue operating system: definitions, guardrails, prompts, QA, and measurement to drive more qualified conversations.

AI sales enablement has a reputation problem. Too many teams buy a shiny tool, run a one-hour training, then wonder why meeting quality drops or reps quietly revert to “manual everything.” The fix is not more AI. It is a rollout that treats AI like a new operating system for revenue, with definitions, guardrails, coaching, and measurement.

This 30-day rollout is built for RevOps, Sales Enablement, SDR leaders, and founders who want a practical outcome: more qualified conversations and more meetings booked, without sacrificing brand safety or trust. It assumes a conversation-led motion, especially on LinkedIn, where replies are messy, context matters, and rigid sequencers break.

Industry evidence: “Our research suggests that a fifth of current sales-team functions could be automated.” — McKinsey & Company.

Source: McKinsey

What “AI sales enablement” actually means (and what it is not)

AI sales enablement is the system that equips reps to use AI to execute the workflow you want, consistently:

- The right targets (ICP and buying groups)

- The right conversations (personalized, compliant, on-brand)

- The right decisions (qualification, routing, next steps)

- The right proof (auditable evidence that explains why a lead is qualified)

It is not:

- “Here’s a chatbot, go prospect faster.”

- Auto-spam at higher volume.

- Replacing seller judgment in high-stakes moments (pricing, procurement, negotiation).

A useful way to frame the change: enablement defines the plays and the quality bar, AI helps execute the plays at scale, and humans supervise and improve the system.

The 30-day rollout outcomes (what you should have by Day 30)

By the end of 30 days, aim to have:

- A single, written definition of “qualified conversation” and “meeting-ready” (with examples and non-examples)

- A pilot running on a controlled segment, with versioned prompts/templates and A/B testing

- A lightweight QA process (sampling, override rules, escalation)

- A weekly metrics scorecard that shows funnel movement (not just activity)

- A repeatable training and certification path for SDRs

- A clear decision on whether to scale, iterate, or stop

If you are using Kakiyo, the goal is to have autonomous LinkedIn conversations running under supervision, with AI-driven qualification, prompt testing, scoring, and analytics configured so your SDR team spends more time on high-value opportunities.

Before you start: pick the rollout shape (one lane, one ICP slice)

Your fastest path to ROI is almost never “enable everything.” Pick one lane where conversation quality matters and where you can measure lift in under a month.

Good starting lanes:

- LinkedIn-first outbound for one persona (for example: VP Sales in SaaS, Head of RevOps in services)

- Expansion or partner recruitment (fewer targets, higher personalization)

- Event follow-up (time-bound intent, clearer CTAs)

Bad starting lanes:

- A broad “all segments, all SDRs, all channels” launch

- Any motion where you cannot define qualification clearly (yet)

If you need a model for a conversation-led funnel, Kakiyo’s post on turning outreach into booked meetings is a solid reference point: SDR Sales: From Outreach to Booked Meetings.

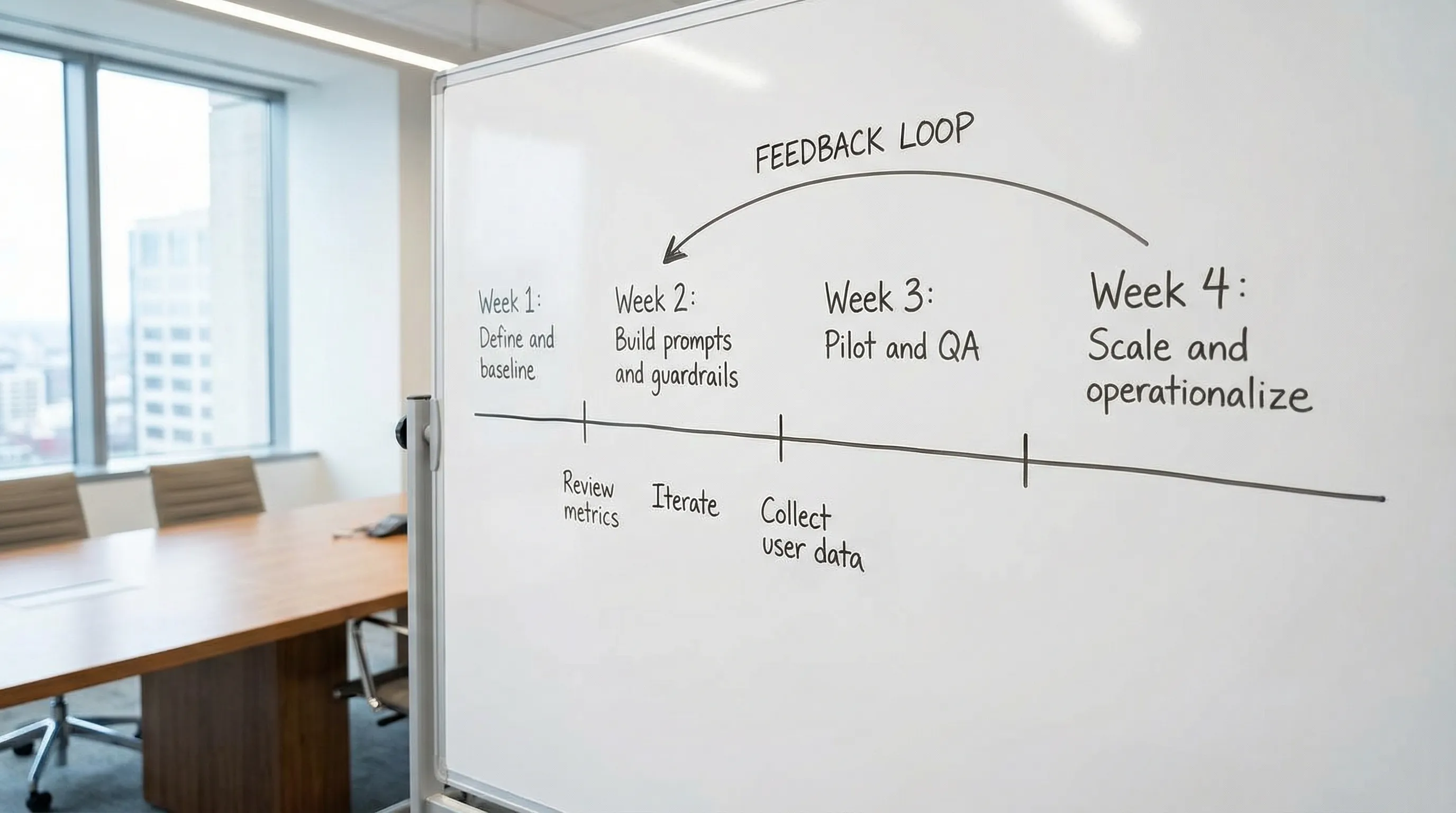

The AI sales enablement rollout plan (30 days)

Day 1-3: Align on outcomes, definitions, and constraints

This is the part teams skip, and it is why pilots fail.

Decide the outcome you will optimize for. Not “more replies.” A better outcome is usually one of these:

- Qualified conversations per week

- Meetings booked per week

- Meetings held rate

- AE acceptance rate (or meeting-to-opportunity conversion, if your cycle is short)

Lock a written qualification definition. Keep it auditable. The easiest structure is Fit + Intent + Evidence (what did they say or do that proves it). If you want deeper examples and benchmarks, see: What Is a Sales Qualified Lead? Examples and Benchmarks.

Set constraints (brand, compliance, risk). In practice, this means:

- Topics the AI should never claim (pricing, legal terms, guarantees)

- Tone boundaries (no manipulation, no invented “we saw you…” signals)

- Human approval requirements (for example: escalations when a prospect asks about security)

- Data handling rules (what can be pasted into prompts, where logs are stored)

For a governance baseline, the NIST AI Risk Management Framework is a helpful, practical reference for mapping risks to controls.

Day 4-7: Baseline the funnel and instrument measurement

You cannot prove lift if you cannot see the funnel.

Baseline for the same segment you will pilot. Pull 2-4 weeks of history if you have it. If you do not, baseline during Week 1 by running your current approach as control.

Track micro-conversions, not just the end result. In conversation-led outbound, early-stage rates predict whether your changes are working.

| Funnel stage | What it means in practice | Why enablement cares |
|---|---|---|
| Connection acceptance rate | Targeting plus first-touch relevance | Proves ICP and message match |
| Reply rate | Any reply, positive or negative | Detects whether you are earning attention |
| Positive reply rate | Replies that open a door | Reduces “busy work” threads |
| Qualified conversation rate | Threads that hit your Fit/Intent bar with evidence | Predicts meeting quality |
| Meetings booked | Calendar event created | The output most teams over-index on |
| Meetings held | Prospect shows up | Filters out low-quality booking |
| AE acceptance (if applicable) | AE confirms meeting is worth taking | Aligns SDR output to revenue reality |

If you want a ready weekly scorecard structure, see: AI Sales Metrics: What to Track Weekly.
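To make the baseline concrete, here is a minimal Python sketch that turns weekly stage counts into the micro-conversion rates from the funnel table. The field names, the sample numbers, and the choice of denominator (each stage divided by the stage directly before it) are assumptions for illustration. Your team may define the rates differently, so write the denominators down before the pilot starts.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class FunnelCounts:
    """Weekly counts for one pilot segment. Field names are illustrative."""
    requests_sent: int
    connections_accepted: int
    replies: int
    positive_replies: int
    qualified_conversations: int
    meetings_booked: int
    meetings_held: int
    ae_accepted: int


def safe_rate(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def funnel_rates(c: FunnelCounts) -> Dict[str, Optional[float]]:
    # Assumption: each stage is divided by the stage directly before it.
    return {
        "connection_acceptance_rate": safe_rate(c.connections_accepted, c.requests_sent),
        "reply_rate": safe_rate(c.replies, c.connections_accepted),
        "positive_reply_rate": safe_rate(c.positive_replies, c.replies),
        "qualified_conversation_rate": safe_rate(c.qualified_conversations, c.positive_replies),
        "meeting_booked_rate": safe_rate(c.meetings_booked, c.qualified_conversations),
        "meetings_held_rate": safe_rate(c.meetings_held, c.meetings_booked),
        "ae_acceptance_rate": safe_rate(c.ae_accepted, c.meetings_held),
    }


# Made-up baseline numbers, only to show the shape of the output.
baseline = FunnelCounts(200, 80, 40, 20, 10, 5, 4, 3)
for name, rate in funnel_rates(baseline).items():
    label = "n/a" if rate is None else f"{rate:.0%}"
    print(f"{name}: {label}")
```

Running the same function on the control weeks and the pilot weeks gives you a like-for-like comparison, as long as the segment and the denominators stay fixed.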

Week 2 (Day 8-14): Build the enablement assets (prompts, plays, QA)

Week 2 is where AI sales enablement becomes real. You are creating the “sales brain” that the AI executes.

Asset 1: ICP slice and targeting rules

Write a one-page ICP slice for the pilot:

- Segment (industry, employee size, region)

- Titles to target

- Disqualifiers (who not to message)

- 3-5 high-signal triggers (hiring, new product line, new territory, tool change)

If your team uses LinkedIn Sales Navigator, align list-building rules here so targeting stays stable during the pilot.

Asset 2: A prompt library with versioning

Treat prompts like sales assets, not personal rep magic.

At minimum, you need:

- First-touch prompt (connection note or first DM style)

- Reply-handling prompt (how to respond to common reply types)

- Qualification prompt (the questions you ask, in the right order)

- Meeting booking prompt (how to suggest scheduling without pushing)

With Kakiyo, this maps well to customizable prompt creation, industry-specific templates, and A/B prompt testing.
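If you keep the library outside a tool for now (a shared repo or even a spreadsheet), the core idea is small enough to sketch. The Python below is a hypothetical in-memory version: the class names, fields, and play names are assumptions for illustration and do not describe how Kakiyo or any other product stores prompts.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PromptVersion:
    play: str      # e.g. "first_touch", "reply_handling", "qualification", "booking"
    version: int   # 1, 2, 3, ... per play
    text: str
    owner: str     # who reviewed and approved this version


class PromptLibrary:
    """Append-only store: published versions are never edited in place."""

    def __init__(self) -> None:
        self._history: Dict[str, List[PromptVersion]] = {}

    def publish(self, play: str, text: str, owner: str) -> PromptVersion:
        versions = self._history.setdefault(play, [])
        new_version = PromptVersion(play, len(versions) + 1, text, owner)
        versions.append(new_version)
        return new_version

    def latest(self, play: str) -> PromptVersion:
        return self._history[play][-1]

    def get(self, play: str, version: int) -> PromptVersion:
        return self._history[play][version - 1]


library = PromptLibrary()
library.publish("first_touch", "Short, specific opener about {trigger}.", owner="enablement")
library.publish("first_touch", "Opener that leads with a question about {trigger}.", owner="enablement")
print(library.latest("first_touch").version)
```

The append-only design is the point: every thread can be tagged with the exact play and version that produced it, which is what makes later A/B comparisons and rollbacks possible.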

Asset 3: Qualification rubric and “evidence packet”

Your enablement team should publish a short rubric that answers:

- What counts as qualified?

- What evidence must exist in the thread?

- What is an automatic disqualifier?

You can implement this as a scorecard. Keep it simple enough to coach.

| Category | What to capture | Evidence examples (in-thread) |
|---|---|---|
| Fit | ICP match, role, account profile | “I lead RevOps for a 200-person SaaS team” |
| Intent | Current priority, active search, dissatisfaction | “We are evaluating tools this quarter” |
| Constraints | Timing, stakeholders, blockers | “Need buy-in from security and finance” |
| Next step | Clear, agreed action | “Yes, send times for next week” |

With Kakiyo, an intelligent scoring system can help standardize this, as long as you still audit the underlying evidence.
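One way to keep the rubric auditable is to store the evidence itself, not just a score. The sketch below is a hypothetical Python structure for an evidence packet. The status names and the rule that Fit and Intent are mandatory while an agreed next step upgrades a thread to meeting-ready are assumptions layered on the Fit + Intent + Evidence idea, so adjust them to your own written definition.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Assumption: a thread cannot be qualified without in-thread evidence for these.
REQUIRED_CATEGORIES = ("fit", "intent")


@dataclass
class EvidencePacket:
    thread_id: str
    evidence: Dict[str, List[str]] = field(default_factory=dict)  # category -> quotes
    disqualifiers: List[str] = field(default_factory=list)

    def add(self, category: str, quote: str) -> None:
        self.evidence.setdefault(category, []).append(quote)


def assess(packet: EvidencePacket) -> Tuple[str, List[str]]:
    """Return (status, details). Details list what blocked a better status."""
    if packet.disqualifiers:
        return "disqualified", list(packet.disqualifiers)
    missing = [c for c in REQUIRED_CATEGORIES if not packet.evidence.get(c)]
    if missing:
        return "needs_evidence", missing
    if packet.evidence.get("next_step"):
        return "meeting_ready", []
    return "qualified", []


packet = EvidencePacket("thread-001")
packet.add("fit", "I lead RevOps for a 200-person SaaS team")
packet.add("intent", "We are evaluating tools this quarter")
print(assess(packet))
```

Because the packet carries quotes rather than numbers, an AE who disagrees with a handoff can point at the exact line that was over-read, which is far easier to coach than a disputed score.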

Asset 4: QA and override rules (human-in-the-loop)

Create rules that make reps feel safe using AI, and make leadership confident it will not go off the rails.

A practical starting policy:

- Daily QA sample: review a small set of threads (for example 10-20) across prompt variants

- Override triggers: pricing, procurement, legal, security, competitor comparisons, angry replies, “remove me” requests

- Escalation owner: one person on point (SDR lead or enablement) to decide prompt edits within 24 hours

Kakiyo supports this style of rollout well if you use its conversation override control plus centralized real-time dashboard to supervise what is happening.
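If you want to prototype the policy before configuring it in a tool, the two mechanical parts (picking the daily sample and spotting override triggers) can be sketched in a few lines of Python. Everything here is an assumption for illustration: the keyword lists are deliberately naive substring matches that will produce false positives, and the thread format is a plain dict with a hypothetical `prompt_version` key.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional

# Naive keyword lists, for illustration only. Real triggers need review and tuning.
OVERRIDE_KEYWORDS = {
    "pricing": ("price", "pricing", "discount"),
    "procurement": ("procurement", "purchase order"),
    "legal": ("contract", "legal", "terms"),
    "security": ("security", "soc 2", "gdpr"),
    "opt_out": ("remove me", "unsubscribe", "stop messaging"),
}


def override_reasons(message: str) -> List[str]:
    """Return every trigger category whose keywords appear in the message."""
    text = message.lower()
    return [
        reason
        for reason, keywords in OVERRIDE_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]


def daily_qa_sample(
    threads: List[Dict], per_variant: int = 5, seed: Optional[int] = None
) -> List[Dict]:
    """Pick up to `per_variant` random threads from each prompt variant."""
    rng = random.Random(seed)
    by_variant = defaultdict(list)
    for thread in threads:
        by_variant[thread["prompt_version"]].append(thread)
    sample = []
    for variant in sorted(by_variant):
        group = by_variant[variant]
        sample.extend(rng.sample(group, min(per_variant, len(group))))
    return sample


print(override_reasons("What is your pricing? Also, please remove me from this list."))
```

Sampling per variant rather than across the whole pool keeps a low-volume variant from being skipped in review, which matters when you are deciding which variant to keep.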

Week 3 (Day 15-21): Launch the pilot (controlled volume, fast feedback)

Week 3 is execution plus learning.

Keep the pilot tight

A strong default:

- 1 ICP slice

- 1-2 SDRs (or a small pod)

- 2 prompt variants max per play

- A fixed daily volume cap

The win condition is not volume. It is a measurable lift in qualified conversations and meeting quality.

Run a daily operating rhythm

In Week 3, you are building muscle memory. Do short, consistent check-ins.

| Cadence | Meeting length | Purpose | Output |
|---|---|---|---|
| Daily QA huddle | 15 minutes | Review samples, tag failures, pick one fix | Prompt edits or rule tweaks |
| Mid-week metric check | 20 minutes | Compare variants and segments | Keep or kill one variant |
| End-of-week debrief | 30-45 minutes | Decide what scales, what changes | Week 4 rollout plan |

If you already have a structured SDR operating model, align this pilot rhythm to it so it does not feel like “extra work.”
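For the mid-week "keep or kill" call, it helps to check whether a gap between two variants is bigger than noise. One common option, which is an addition here and not something the rollout prescribes, is a two-proportion z-test. The Python sketch below uses only the standard library. The function name and inputs are illustrative, and with pilot-sized samples the result should be read as a rough signal, not a verdict.

```python
import math
from typing import Tuple


def two_proportion_z_test(
    successes_a: int, total_a: int, successes_b: int, total_b: int
) -> Tuple[float, float]:
    """Compare two conversion rates. Returns (z, two-sided p-value).

    A positive z means variant B converted at a higher rate than variant A.
    """
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Both variants converted at exactly 0% or 100%: no measurable difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided, normal approximation
    return z, p_value


# Hypothetical counts: qualified conversations out of positive replies per variant.
z, p = two_proportion_z_test(10, 100, 30, 100)
print(f"z = {z:.2f}, p = {p:.4f}")
```

The normal approximation gets unreliable when either variant has only a handful of conversions, so for very small pilots treat the QA samples and the thread evidence as the primary input and the test as a sanity check.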

Measure what changed, not just what happened

When results move, attribute the movement to a specific change:

- Targeting change (list rules)

- Prompt change (version)

- Qualification change (questions asked)

- Timing change (follow-up windows)

This is where advanced analytics and reporting matter, especially if you slice by prompt version and persona.
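If your reporting tool does not slice this way out of the box, the grouping is simple to reproduce from an export. This is a minimal Python sketch, assuming each thread is a dict with hypothetical `prompt_version`, `persona`, and `qualified` keys.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def qualified_rate_by_slice(threads: List[Dict]) -> Dict[Tuple[str, str], float]:
    """Qualified-conversation rate per (prompt_version, persona) slice."""
    counts = defaultdict(lambda: [0, 0])  # slice -> [qualified, total]
    for thread in threads:
        key = (thread["prompt_version"], thread["persona"])
        counts[key][1] += 1
        if thread["qualified"]:
            counts[key][0] += 1
    return {key: qualified / total for key, (qualified, total) in counts.items()}


# Made-up export rows, only to show the shape of the data.
export = [
    {"prompt_version": "v1", "persona": "VP Sales", "qualified": True},
    {"prompt_version": "v1", "persona": "VP Sales", "qualified": False},
    {"prompt_version": "v2", "persona": "VP Sales", "qualified": True},
    {"prompt_version": "v2", "persona": "Head of RevOps", "qualified": False},
]
for slice_key, rate in sorted(qualified_rate_by_slice(export).items()):
    print(slice_key, f"{rate:.0%}")
```

Slices with only a few threads will swing wildly, so report the thread count next to each rate before drawing conclusions.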

Week 4 (Day 22-30): Scale, document, and operationalize

Week 4 is where enablement turns a pilot into a capability.

Step 1: Convert what worked into a “v1 playbook”

Your playbook should include:

- ICP slice definition

- Prompt versions that won (and what they are for)

- Qualification rubric and disqualifiers

- QA process and override rules

- Handoff requirements (what must be in the CRM or in the thread summary)

Keep it short. A playbook nobody reads is not enablement.

Step 2: Add training and certification

AI tools change behavior. Behavior changes need coaching.

A simple certification that increases adoption:

- SDR can explain the qualification rubric in plain English

- SDR can identify at least 5 “override triggers” correctly

- SDR can show 3 example threads that meet the quality bar

- SDR can run a basic A/B test responsibly (one variable)

If you want a structured onboarding approach that fits a LinkedIn-first motion, adapt your certification artifacts from: Sales Development Representative Onboarding Checklist.

Step 3: Expand scope carefully

Scale in one dimension at a time:

- Add SDRs, keep ICP constant

- Or add a second ICP slice, keep SDR pod constant

- Or add one new play (for example: event follow-up), keep everything else stable

This prevents the common “everything changed, we learned nothing” problem.
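The one-dimension rule is easy to state and easy to break under pressure, so some teams encode it as a check on every proposed scope change. The Python below is a hypothetical sketch: the `PilotScope` fields and the example names are assumptions chosen to mirror the three options above.

```python
from dataclasses import dataclass, fields
from typing import FrozenSet, List


@dataclass(frozen=True)
class PilotScope:
    sdrs: FrozenSet[str]
    icp_slices: FrozenSet[str]
    plays: FrozenSet[str]


def changed_dimensions(current: PilotScope, proposed: PilotScope) -> List[str]:
    """Names of the scope dimensions that differ between two plans."""
    return [
        f.name
        for f in fields(current)
        if getattr(current, f.name) != getattr(proposed, f.name)
    ]


def is_single_dimension_change(current: PilotScope, proposed: PilotScope) -> bool:
    return len(changed_dimensions(current, proposed)) == 1


current = PilotScope(
    sdrs=frozenset({"sdr_a", "sdr_b"}),
    icp_slices=frozenset({"vp_sales_saas"}),
    plays=frozenset({"linkedin_outbound"}),
)
proposed = PilotScope(
    sdrs=frozenset({"sdr_a", "sdr_b", "sdr_c"}),
    icp_slices=frozenset({"vp_sales_saas"}),
    plays=frozenset({"linkedin_outbound"}),
)
print(changed_dimensions(current, proposed), is_single_dimension_change(current, proposed))
```

A proposal that fails the check is not forbidden, but it should be split into two steps so each week's results can still be traced to one change.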

The enablement control panel: what to monitor weekly

Your weekly review should answer two questions:

- Are we creating more qualified conversations and held meetings?

- Are we doing it safely and consistently?

Here is a compact “control panel” that blends outcomes with governance.

| Metric | Direction | What it tells you | Common fix when it breaks |
|---|---|---|---|
| Qualified conversation rate | Up | Targeting and qualification are working | Tighten ICP, adjust qualification questions |
| Meetings held rate | Up | Booking quality is real | Add confirmation step, improve expectation-setting |
| AE acceptance rate | Up | Downstream trust is improving | Improve evidence packet and routing rules |
| Time-to-first-response | Down | You are capturing intent faster | Increase coverage, refine reply-handling prompts |
| Override rate (AI to human) | Stable | Governance is functioning | If too high: prompt gaps, if too low: risk of over-autonomy |
| Disqualification reasons | Concentrated | You are learning what “not ICP” means | Update disqualifiers and targeting |

Kakiyo’s dashboard and analytics are a natural place to centralize these if LinkedIn conversations are your primary lane.
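As a rough illustration of how the control panel can drive the weekly review, the Python sketch below compares this week to last week and flags any metric moving against its expected direction. The metric keys, the sample values, and the 0.05 tolerance for "stable" are assumptions for illustration. Disqualification reasons are left out because "concentrated" is a distribution question that needs its own review.

```python
from typing import Dict, List

# Expected direction per metric, taken from the control panel table.
EXPECTED_DIRECTION = {
    "qualified_conversation_rate": "up",
    "meetings_held_rate": "up",
    "ae_acceptance_rate": "up",
    "time_to_first_response_hours": "down",
    "override_rate": "stable",
}


def flag_metrics(
    last_week: Dict[str, float],
    this_week: Dict[str, float],
    stable_tolerance: float = 0.05,  # assumed band for "stable", absolute change
) -> List[str]:
    """Return metrics that moved against their expected direction."""
    flagged = []
    for metric, direction in EXPECTED_DIRECTION.items():
        delta = this_week[metric] - last_week[metric]
        if direction == "up" and delta < 0:
            flagged.append(metric)
        elif direction == "down" and delta > 0:
            flagged.append(metric)
        elif direction == "stable" and abs(delta) > stable_tolerance:
            flagged.append(metric)
    return flagged


# Made-up weekly numbers, only to show the output.
last_week = {
    "qualified_conversation_rate": 0.20,
    "meetings_held_rate": 0.80,
    "ae_acceptance_rate": 0.50,
    "time_to_first_response_hours": 4.0,
    "override_rate": 0.10,
}
this_week = {
    "qualified_conversation_rate": 0.25,
    "meetings_held_rate": 0.70,
    "ae_acceptance_rate": 0.50,
    "time_to_first_response_hours": 3.0,
    "override_rate": 0.20,
}
print(flag_metrics(last_week, this_week))
```

A flag is a prompt for the review conversation, not an automatic action: one week of movement on small volumes can easily be noise.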

Common rollout failure modes (and how to prevent them)

Failure mode: You optimize for replies, not revenue outcomes

Replies are easy to inflate. Qualified conversations and held meetings are harder to game.

Fix: tie prompt experiments to a downstream KPI, and keep a written qualification bar.

Failure mode: You let everyone write their own prompts

This creates “prompt drift,” inconsistent brand voice, and uncoachable variance.

Fix: publish a versioned prompt library owned by enablement. Allow suggestions, but require review before anything ships.

Failure mode: Nobody trusts the qualification

If AEs think meetings are low quality, the project dies even if top-of-funnel metrics look good.

Fix: require an evidence packet (Fit, Intent, proof from the thread), and review AE rejection reasons weekly.

Failure mode: You skip governance because the pilot “looks fine”

Governance is most important when the pilot is working, because that is when you scale.

Fix: formalize override triggers, QA sampling, and escalation ownership before increasing volume.

Where Kakiyo fits in this 30-day rollout

If your primary enablement problem is managing LinkedIn conversations from first touch through qualification and booking, Kakiyo is designed for that layer of work:

- Autonomous LinkedIn conversations so you can handle more threads without losing context

- AI-driven lead qualification to standardize what “qualified” means in practice

- A/B prompt testing and industry templates to improve messaging with discipline

- Intelligent scoring plus a real-time dashboard so leaders can supervise outcomes and quality

- Conversation override control to keep humans in charge when stakes are high

- Analytics and reporting to connect prompt versions to funnel results

If you want a deeper look at how conversation-led AI differs from step-based sequencers, this comparison is helpful: Sales AI Tools vs Legacy Sequencers.

A practical next step

If you want this rollout to feel manageable, do one thing today: write your pilot qualification definition on one page, then pick the single ICP slice where a better conversation would immediately improve pipeline quality.

When you are ready to operationalize the workflow on LinkedIn, explore how Kakiyo supports supervised, scalable conversation management at Kakiyo.

FAQ

Where does AI actually help in sales?

AI helps most when it reduces repetitive work, surfaces better signals, and improves execution speed. It is strongest in research, qualification support, follow-up, and workflow automation.

What are the limits of AI in sales?

AI still depends on clean inputs, good process design, and human oversight. It can accelerate bad workflows just as easily as good ones.

How should teams evaluate AI sales tools?

Evaluate tools by workflow fit, data quality, implementation friction, and measurable business outcomes. A feature-rich tool is not automatically the right tool for your motion.

Does AI replace SDRs or sales reps?

Usually no. In most teams, AI reshapes the role by taking over repetitive execution while humans stay responsible for judgment, positioning, and relationship depth.