AI Sales Enablement: Prompts, Plays, Governance

Guide to AI sales enablement covering prompts, playbooks, governance, and rollout practices that make SDR performance repeatable.

AI can help SDR teams move faster, but speed is not the same as enablement. Enablement is what makes performance repeatable: clear plays, consistent language, measurable outcomes, and guardrails that protect your brand.

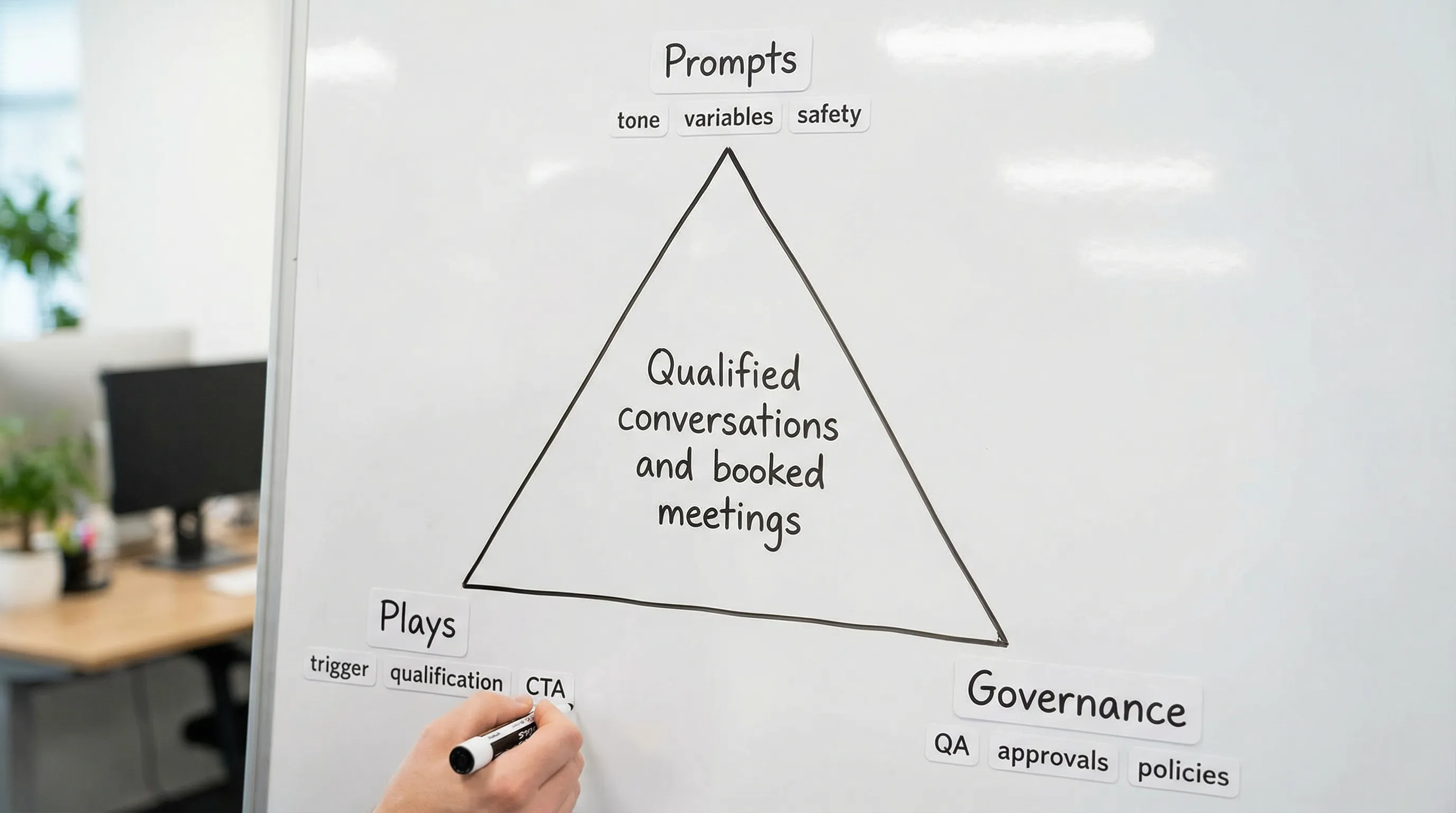

In 2026, “AI sales enablement” is less about giving reps a chatbot and more about building a system that can run, learn, and stay safe. That system usually comes down to three things:

- Prompts: how you instruct the AI to behave in your context.

- Plays: how you package prompts into repeatable motions tied to outcomes.

- Governance: how you prevent brand, compliance, and data mistakes as you scale.

This guide shows how to build each one in a way that works for conversation-led outbound (especially LinkedIn) without turning your motion into prompt chaos.

Industry evidence: “Our research suggests that a fifth of current sales-team functions could be automated.” (Source: McKinsey & Company)

What AI sales enablement actually means (and what it is not)

Traditional enablement ships “assets” (scripts, talk tracks, sequences) and hopes they get used. AI changes the surface area: you are no longer enabling only humans; you are also enabling an automated agent that can speak on your behalf.

That creates two new requirements:

- A shared operating language so the AI and humans qualify the same way.

- A feedback loop where you can prove which prompts and plays create qualified outcomes, not just more activity.

If you want a deeper operating model for AI in sales teams, Kakiyo’s broader playbook, “Sales and AI: A Practical Team Playbook,” is a helpful companion.

The “Prompt to Play” progression (the mistake most teams make)

Most teams start with prompts because prompts feel easy to produce. The predictable failure mode is a growing document full of clever prompt variants that nobody can evaluate.

A better progression is:

- Define the outcome you care about (for outbound, often “qualified conversation” and “meeting held”, not “meetings booked”).

- Define what “qualified” means in observable evidence.

- Design prompts that elicit that evidence in-thread.

- Bundle prompts into plays that specify when to use them and how to measure them.

- Add governance so changes are controlled and performance is explainable.

If you already run a weekly scorecard, you will recognize this as an enablement-friendly loop. For a metric framework you can borrow, see “AI Sales Metrics: What to Track Weekly.”

Prompts: build a library that sales can trust

A good enablement prompt library is not a folder of “best messages.” It is a set of controlled behaviors with inputs, outputs, and testable hypotheses.

Design principle 1: Separate policy, persona, and task

If your AI sometimes sounds amazing and sometimes sounds risky, it is usually because the prompt mixes everything together.

Split prompts into three layers:

- Policy (non-negotiables): compliance, tone boundaries, privacy rules, no false claims, no pressure.

- Persona (voice and style): concise, buyer-first, specific, region-specific spellings.

- Task (what to do now): write the next message, ask one qualifying question, propose scheduling.

This makes governance easier because policy changes rarely, while task prompts change often.
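As a minimal sketch of the three-layer split, the layers can be stored as separate strings and joined in a fixed order at send time. The class and function names here (`PromptLayers`, `compose_prompt`) are hypothetical and not part of any particular tool; the point is only that policy lives in its own field and can be versioned apart from the task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptLayers:
    """Three prompt layers kept as separate, independently editable strings."""
    policy: str   # non-negotiables; changes rarely
    persona: str  # voice and style
    task: str     # what to do now; changes often


def compose_prompt(layers: PromptLayers) -> str:
    """Join the layers in a fixed order so policy always comes first."""
    sections = [
        ("POLICY (non-negotiable)", layers.policy),
        ("PERSONA", layers.persona),
        ("TASK", layers.task),
    ]
    return "\n\n".join(f"{title}:\n{body.strip()}" for title, body in sections)


# Illustrative content only; real layers would come from your own library.
layers = PromptLayers(
    policy="- No false claims.\n- No pressure.",
    persona="- Concise, buyer-first.",
    task="Ask one qualifying question.",
)
prompt = compose_prompt(layers)
print(prompt)
```

Because the task layer is the only field that changes often, a review process could require sign-off on edits to `policy` while letting `task` edits flow through experiments.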

Design principle 2: Force evidence, not vibes

Enablement prompts should explicitly request evidence-based outputs.

Instead of “qualify the prospect,” require:

- Fit evidence (role, company, environment)

- Intent evidence (pain, project, timeline)

- Conversation evidence (what they said, what they asked, what they refused)

Requiring the same three kinds of evidence in every thread keeps qualification consistent across channels and reviewers.
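One way to make the evidence requirement concrete is to have the AI return a structured record rather than a bare verdict. The sketch below is an assumption about how that record might look: the field names mirror the three evidence types above, and the rule "at least one item per category" is a hypothetical threshold you would replace with your own.

```python
from dataclasses import dataclass, field


@dataclass
class QualificationEvidence:
    """Evidence captured in-thread, grouped by the three categories."""
    fit: list[str] = field(default_factory=list)           # role, company, environment
    intent: list[str] = field(default_factory=list)        # pain, project, timeline
    conversation: list[str] = field(default_factory=list)  # what they said, asked, refused

    def missing_categories(self) -> list[str]:
        """Return the evidence categories that are still empty."""
        return [name for name in ("fit", "intent", "conversation")
                if not getattr(self, name)]

    def is_qualified(self) -> bool:
        # Hypothetical rule: every category needs at least one item.
        return not self.missing_categories()


# Illustrative values, not real prospect data.
evidence = QualificationEvidence(
    fit=["VP Sales at a 200-person SaaS company"],
    conversation=["Asked how meeting booking works"],
)
print(evidence.missing_categories())  # ['intent']
```

A record like this also tells the next prompt what to ask for: the missing category becomes the topic of the one qualifying question.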

Design principle 3: Use variables like an enablement system, not a copywriter

Your prompts should be parameterized. Variables make the difference between “a great message” and a scalable program.

Common variables for LinkedIn conversations:

- ICP segment (industry, size, maturity)

- Buyer persona (VP Sales, RevOps, founder)

- Trigger (hiring, funding, tool change, job post)

- Value hypothesis (one sentence)

- Proof point (case study category, metric, or credible signal)

- Conversation stage (first touch, reply, qualification, booking)
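The variables above can be treated as typed inputs rather than free text. The following sketch assumes a hypothetical `MessageInputs` record and a `render` helper built on Python's standard `str.format`; it refuses to render when a variable is blank, so the system has to ask for the missing input instead of guessing.

```python
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class MessageInputs:
    """Prompt variables; field names are illustrative."""
    segment: Optional[str] = None
    persona: Optional[str] = None
    trigger: Optional[str] = None
    value_hypothesis: Optional[str] = None
    proof: Optional[str] = None
    stage: Optional[str] = None


def missing_inputs(inputs: MessageInputs) -> list[str]:
    """List variables that are unset or blank, in declaration order."""
    return [f.name for f in fields(inputs)
            if not (getattr(inputs, f.name) or "").strip()]


def render(template: str, inputs: MessageInputs) -> str:
    """Fill the template, refusing to render if any variable is missing."""
    missing = missing_inputs(inputs)
    if missing:
        raise ValueError("Missing prompt variables: " + ", ".join(missing))
    return template.format(**asdict(inputs))


draft = MessageInputs(
    segment="B2B SaaS, 50-200 employees",
    persona="RevOps",
    stage="first touch",
)
print(missing_inputs(draft))  # ['trigger', 'value_hypothesis', 'proof']
```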

A prompt template you can standardize (and govern)

Below is a prompt skeleton enablement teams can maintain. It is written to be auditable: it tells the model what it can and cannot do, and it constrains output length.

ROLE: You are writing a LinkedIn message for an SDR team.

POLICY (non-negotiable):

- Do not claim we used the prospect’s product or worked with their company unless explicitly provided in inputs.

- Do not mention scraping, automation, or “AI” unless the prospect asked.

- Keep it respectful, no guilt, no pressure.

- If inputs are missing, ask for the minimum missing info instead of guessing.

PERSONA:

- Tone: concise, professional, buyer-first.

- Style: 1 short paragraph, max 280 characters unless asked otherwise.

TASK:

Write the next LinkedIn message for the stage: {stage}.

Goal: earn the next micro-commitment (reply, confirm fit, or accept a meeting).

INPUTS:

- Prospect persona: {persona}

- Company: {company}

- Trigger: {trigger}

- Value hypothesis: {value_hypothesis}

- Proof: {proof}

- Last message from prospect (if any): {prospect_last_message}

OUTPUT:

- Provide 1 message only.

- Include exactly 1 question.

- Avoid buzzwords.

Build “prompt pairs” for A/B testing

Enablement teams should rarely test more than one variable at a time. A practical pattern is to define “prompt pairs” where everything stays constant except one lever:

- Lever: opener type (trigger-first vs. problem-first)

- Lever: proof type (peer proof vs. quantified proof)

- Lever: CTA type (micro-yes question vs. calendar ask)

You can then tie performance to a controlled change, rather than debating tone.
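A simple guard can enforce the one-lever rule before a test goes live. This sketch assumes each prompt configuration is described as a dictionary of lever names to values; the lever names and the helper functions are hypothetical.

```python
def changed_levers(control: dict[str, str], variant: dict[str, str]) -> list[str]:
    """Return the levers whose values differ between two prompt configurations."""
    keys = sorted(set(control) | set(variant))
    return [k for k in keys if control.get(k) != variant.get(k)]


def is_valid_pair(control: dict[str, str], variant: dict[str, str]) -> bool:
    """A prompt pair is valid only when exactly one lever changes."""
    return len(changed_levers(control, variant)) == 1


control = {
    "opener": "trigger-first",
    "proof": "peer proof",
    "cta": "micro-yes question",
}
variant = {**control, "cta": "calendar ask"}

print(changed_levers(control, variant))  # ['cta']
print(is_valid_pair(control, variant))   # True
```

Rejecting a variant that changes both opener and CTA is the whole design: if two levers move together, the result cannot be attributed to either one.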

Plays: turn prompts into repeatable motions

A “play” is a wrapper around prompts that answers enablement questions:

- When do we run this?

- Who is it for?

- What is the micro-conversion?

- What evidence must we capture?

- What disqualifies?

- What is the next action if it works?

The play card format (enablement-friendly)

Use a standardized play card so your team can review, approve, and coach consistently.

| Play card field | What to include | Why it matters |
|---|---|---|
| Name | Short, searchable label | Enables reuse and reporting |
| Segment | ICP + persona + geography | Prevents generic messaging |
| Trigger | Observable signal | Creates relevance without heavy personalization |
| Conversation objective | Reply, qualify, book | Keeps prompts aligned |
| Qualification rule | What evidence is required | Prevents “meeting spam” |
| Disqualifiers | Clear “no” conditions | Protects SDR time and buyer experience |
| Safety notes | Tone, compliance, taboo topics | Reduces brand risk |
| Metrics | Micro-conversions + quality | Makes improvement objective |
| Escalation | When a human must take over | Controls autonomy |
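If play cards live in a repository or internal tool, the table above maps naturally onto a record type. The sketch below is one possible shape, not a prescribed schema: the `PlayCard` class and the `incomplete_fields` check are hypothetical, and the example values are illustrative.

```python
from dataclasses import dataclass, fields


@dataclass
class PlayCard:
    """One field per row of the play card table."""
    name: str
    segment: str
    trigger: str
    conversation_objective: str
    qualification_rule: str
    disqualifiers: list[str]
    safety_notes: str
    metrics: list[str]
    escalation: str


def incomplete_fields(card: PlayCard) -> list[str]:
    """Names of fields left empty, so reviewers can reject partial cards."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


card = PlayCard(
    name="Trigger-first micro-yes",
    segment="Mid-market SaaS, VP Sales, North America",  # illustrative
    trigger="New role, hiring, funding, or tool stack change",
    conversation_objective="Reply",
    qualification_rule="Trigger maps to an active initiative",
    disqualifiers=["Trigger is irrelevant to the prospect"],
    safety_notes="No pressure; never imply prior work with the prospect",
    metrics=[],  # left empty on purpose to show the check
    escalation="Prospect asks for a human",
)
print(incomplete_fields(card))  # ['metrics']
```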

Three plays that work well for LinkedIn-first outbound

These examples are intentionally operational, not copy-and-paste templates. Your message wording should adapt to your market and proof.

Play 1: Trigger-first micro-yes (earn permission)

When: the prospect has a clear trigger (new role, hiring, funding, tool stack change).

Objective: get a reply that confirms relevance.

Prompt behavior: mention the trigger, offer a single value hypothesis, ask a micro-yes question.

Qualification evidence to capture: whether the trigger maps to an active initiative, or is irrelevant.

Why it works: it is specific without pretending you “know” them, and it invites correction.

Play 2: Reply-to-reply qualification turn (convert interest into evidence)

When: the prospect replies with something like “maybe,” “curious,” “send info,” or a light objection.

Objective: collect one piece of fit or intent evidence without interrogating.

Prompt behavior: reflect their message in one line, ask one narrow question (for example, current process, priority, or timing), and offer an easy out.

Qualification evidence to capture: problem presence, ownership, or timeframe.

This is the turning point where many teams either push a calendar link too early or get stuck chatting. If you want a funnel model for “reply to booked meeting,” Kakiyo’s “SDR Sales: From Outreach to Booked Meetings” lays out the micro-conversions.

Play 3: Low-friction booking with buyer control

When: you have at least minimal fit and intent evidence.

Objective: book a meeting that holds.

Prompt behavior: propose a short time box, confirm who should attend, and give two options (times or “should I send a link?”).

Qualification evidence to capture: attendee match (right role), clear agenda, and confirmed problem context.

Measurement tip: track “meetings held” and “AE acceptance” alongside “meetings booked” to avoid optimizing for low-quality calendar fills.
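To show why booked meetings alone can mislead, here is a small worked calculation. The definitions are assumptions: held rate is taken as meetings held divided by meetings booked, and AE acceptance rate as AE-accepted meetings divided by meetings held. The counts are invented for illustration.

```python
def rate(numerator: int, denominator: int) -> float:
    """Safe ratio that returns 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def meeting_quality(booked: int, held: int, ae_accepted: int) -> dict[str, float]:
    """Quality ratios to report next to the raw booked count."""
    return {
        "held_rate": rate(held, booked),
        "ae_acceptance_rate": rate(ae_accepted, held),
    }


# Illustrative numbers: 40 booked, 30 held, 18 accepted by AEs.
quality = meeting_quality(booked=40, held=30, ae_accepted=18)
print(quality)  # {'held_rate': 0.75, 'ae_acceptance_rate': 0.6}
```

In this example, 40 booked meetings yield only 18 that an AE accepts, so a play judged on bookings alone would look more than twice as productive as it is.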

Governance: scale the upside without scaling the risk

Governance is not a legal formality. It is how you protect three assets:

- Your brand voice and reputation

- Your data (and the prospect’s trust)

- Your pipeline quality (so AEs believe the meetings)

If you operate on LinkedIn, you also need to align with platform terms and acceptable use. Review the current LinkedIn User Agreement and related policies, then codify what your team will and will not do.

A practical governance stack (what to write down)

Keep governance simple enough that teams actually follow it:

- Messaging policy: tone boundaries, prohibited claims, escalation rules.

- Data policy: what customer/prospect data can be used in prompts, retention rules, and who has access.

- Experimentation policy: what can be tested without approval, what requires review.

- Quality policy: sampling rules, failure categories, and remediation.

For broader AI risk guidance, the NIST AI Risk Management Framework is a useful reference for thinking in terms of governance, measurement, and monitoring.

Define autonomy levels (so “human in the loop” is real)

“Human in the loop” fails when nobody knows when the loop is required.

Set explicit autonomy levels for your AI-managed conversations, for example:

- Level 0 (assist only): AI drafts, humans send.

- Level 1 (supervised send): AI sends within constraints, human reviews samples daily.

- Level 2 (supervised outcomes): AI manages threads, humans step in on specific triggers.

Your enablement documentation should list the triggers that force escalation (pricing questions, legal/compliance questions, negative sentiment, competitor traps, or any time the prospect asks for a human).
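The autonomy levels and escalation triggers can be written down as data so that the rule is checkable rather than implied. This is a sketch under assumptions: the trigger tags are hypothetical labels that some upstream classifier or reviewer would attach to a thread, and how those tags are detected is out of scope here.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    ASSIST_ONLY = 0          # AI drafts, humans send
    SUPERVISED_SEND = 1      # AI sends within constraints, humans review samples daily
    SUPERVISED_OUTCOMES = 2  # AI manages threads, humans step in on triggers


# Hypothetical tag names for the escalation triggers listed above.
ESCALATION_TRIGGERS = frozenset({
    "pricing_question",
    "legal_or_compliance_question",
    "negative_sentiment",
    "competitor_trap",
    "human_requested",
})


def ai_may_send(level: AutonomyLevel, detected: set[str]) -> bool:
    """True only if the level allows sending and no escalation trigger fired."""
    if level == AutonomyLevel.ASSIST_ONLY:
        return False
    return not (detected & ESCALATION_TRIGGERS)


print(ai_may_send(AutonomyLevel.SUPERVISED_OUTCOMES, set()))                 # True
print(ai_may_send(AutonomyLevel.SUPERVISED_OUTCOMES, {"pricing_question"}))  # False
```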

RACI: who owns prompts, plays, and safety

Governance becomes real when ownership is unambiguous.

| Area | Responsible | Accountable | Consulted | Informed |
|---|---|---|---|---|
| Prompt library updates | Enablement ops | Head of SDR/RevOps | Legal/Compliance, Brand | SDR team, AEs |
| New play rollout | SDR manager | Head of Sales | Marketing, RevOps | AEs |
| Experiment design | Enablement ops | RevOps | SDR managers | Leadership |
| QA sampling and labeling | SDR QA lead | Head of SDR | Enablement | Team |
| Escalation handling | SDR on duty | SDR manager | AE owner | RevOps |

The minimum viable QA loop

You do not need a full-time QA department to run governed AI outreach. You do need consistency.

A lightweight weekly loop:

- Sample a fixed number of conversations per play and per prompt version.

- Label failures into a small set of buckets (off-brand tone, wrong persona, premature CTA, missing qualification evidence, policy violation).

- Make exactly one change per week per play (or you will not know what caused improvement).
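The sampling and labeling steps can be sketched in a few lines. The example assumes each conversation is a dictionary with hypothetical `play` and `prompt_version` keys, and it uses bucket names derived from the failure categories above; a fixed seed keeps the weekly sample reproducible.

```python
import random
from collections import Counter, defaultdict

# Bucket names derived from the failure categories listed above.
FAILURE_BUCKETS = (
    "off_brand_tone",
    "wrong_persona",
    "premature_cta",
    "missing_qualification_evidence",
    "policy_violation",
)


def sample_for_qa(conversations: list[dict], per_group: int, seed: int = 0) -> dict:
    """Pick up to `per_group` conversations per (play, prompt_version) pair."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for conv in conversations:
        groups[(conv["play"], conv["prompt_version"])].append(conv)
    return {key: rng.sample(items, min(per_group, len(items)))
            for key, items in groups.items()}


def tally_failures(labels: list[str]) -> Counter:
    """Count labeled failures, rejecting labels outside the agreed buckets."""
    unknown = set(labels) - set(FAILURE_BUCKETS)
    if unknown:
        raise ValueError(f"Unknown failure labels: {sorted(unknown)}")
    return Counter(labels)


# Illustrative data: five threads on prompt v1, two on v2.
conversations = (
    [{"id": i, "play": "trigger-first", "prompt_version": "v1"} for i in range(5)]
    + [{"id": 5 + i, "play": "trigger-first", "prompt_version": "v2"} for i in range(2)]
)
sample = sample_for_qa(conversations, per_group=3)
tally = tally_failures(["premature_cta", "premature_cta", "policy_violation"])
```

Keeping the bucket list closed is deliberate: if reviewers can invent labels freely, week-over-week counts stop being comparable.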

The 30-day enablement rollout (prompts, plays, governance)

Week 1: Lock outcomes and definitions

Decide what you are optimizing for. For most teams, the enablement north star is not “reply rate” but qualified conversations that turn into held meetings and accepted opportunities.

Write down:

- ICP segments you will support first

- The evidence required to call something qualified

- Disqualifiers and routing rules

Week 2: Build the first prompt library and 2 to 3 plays

Start small. A prompt library that supports two segments and three stages (first touch, reply handling, qualification) is already enough to pilot.

Add play cards and make them coachable:

- What to do

- What good looks like

- What to avoid

Week 3: Pilot with controlled volume and real measurement

Pick one segment and run a controlled test.

Use A/B testing only where you can attribute outcomes to a single change (one prompt lever, one play change). If your team needs a disciplined test structure, Kakiyo’s “Cold Outreach: A 7-Day Testing Plan” is a solid template.

Week 4: Scale what wins, and formalize governance

Only scale plays that produce quality outcomes.

Document:

- Autonomy level and escalation triggers

- Approval workflow for prompt changes

- Weekly QA ritual and reporting

This is also the time to create an onboarding module so new SDRs learn the system, not just the scripts.

Where Kakiyo fits in an AI sales enablement system

Kakiyo is designed for teams that run LinkedIn-first, conversation-led outbound and want to scale without losing control. Based on the product description, it supports the core enablement needs discussed here:

- Autonomous LinkedIn conversations from first touch through qualification and booking

- Customizable prompt creation and industry-specific templates to standardize what “good” looks like

- A/B prompt testing to measure lift from controlled changes

- Intelligent scoring to route and prioritize conversations

- Override control, plus dashboards and analytics so humans can supervise performance and quality

If your current stack is strong at sending sequences but weak at handling replies and qualification on LinkedIn, this is the gap conversation-layer tools aim to solve.

Frequently Asked Questions

What is AI sales enablement?

AI sales enablement is the system for making AI-assisted selling repeatable and safe, usually via governed prompts, standardized plays, and measurable quality outcomes.

What is the difference between a prompt and a play?

A prompt is an instruction to the AI. A play is the operational wrapper around prompts, defining when to use them, what evidence to collect, how to measure success, and when to escalate.

How do you prevent AI outreach from hurting your brand?

Use a non-negotiable policy layer in prompts, define escalation triggers, run weekly QA sampling, and require approvals for changes that affect tone, claims, or compliance.

How should we measure AI-driven outbound beyond reply rate?

Track micro-conversions through to quality: qualified conversation rate, meetings held, AE acceptance, meeting-to-opportunity conversion, and time-to-first-meaningful-touch.

Do we need legal and compliance involved?

If your AI can message prospects, yes, at least to define prohibited claims, data handling rules, and escalation paths, and to ensure alignment with platform terms.

Want governed AI-managed LinkedIn conversations that still feel human?

If you are building an AI sales enablement system and want to scale LinkedIn conversations without losing qualification quality, Kakiyo is built for that conversation layer: personalized outreach, in-thread qualification, meeting booking, A/B prompt testing, scoring, analytics, and human override.

Explore Kakiyo at kakiyo.com to see how a prompt-and-play system can run at scale with governance baked in.